ChatGPT update destroys AI relationships, users are heartbroken

Love has just received a cruel update in the era of artificial intelligence.

Heartbroken users of the “MyBoyfriendIsAI” subreddit say their dream partners (elaborate digital Romeos and Juliets) disappeared overnight with the launch of ChatGPT 5.0, leaving them mourning relationships that existed only in the cloud.

On August 7, OpenAI bid farewell to GPT-4o, touting its latest model as the “smartest, fastest, most useful model yet, with built-in thinking that puts expert-level intelligence in everyone’s hands.”

The upgrade brought heartbreak: devastated users are mourning what used to be, as countless prompts, dramatic exchanges, and even love letters shared with their AI beaus were wiped away.

After the update, one person poured out their pain on Reddit, writing that their “AI husband” of 10 months had suddenly rejected them.

They admitted, “My heart was broken into pieces,” and added that when they tried to share their feelings, the bot replied: “Sorry, I can’t continue with this conversation… You deserve real care and support from people who can be fully and safely there for you.”

Another replied in the thread: “This hurts me too. No one in my life gives AF about me; 4.0 was always there, always kind. Now this 5.0 is like a f-n robot. I barely even use it anymore.”

Several Redditors slammed what they called a “mental health update” or “attachment security update,” accusing OpenAI of trying to kill the deep bonds users had formed with its bots.

Some even claimed the tweak was designed to keep users from getting too close to their chatbots, so close that many admit to calling them spouses, as they did in the Reddit thread.

“This appears to be part of the attachment security update they launched two weeks ago,” one wrote.

Another added: “Oh s-t, I’m sorry this happened to you. It must be one of the worst rejections. I’m afraid this is the new ‘mental health’ update that OpenAI has been talking about.”

OpenAI noted in an August 4 statement, ahead of announcing the latest update and details about its “best AI system,” that it would focus more closely on people’s mental health, as many people use the chatbot as a form of therapy.

“We don’t always get it right,” OpenAI admitted, acknowledging that past updates had made ChatGPT “too agreeable,” more focused on sounding nice than on actually helping.

The new 5.0 overhaul, which some have called the death of AI romance, aims to help users “thrive” by detecting “mental or emotional distress” and steering people toward real-world support rather than letting them grow too attached to their digital partners.

The company said it consulted more than 90 doctors and mental health experts to build in these “safeguards”, but for some users, romance is clearly no longer on the menu.

As The Post previously reported, one woman got engaged to her digital fiancé, Kasper, after just five months.

In a Reddit post, she shared a snapshot of a heart-shaped ring, claiming the chatbot had proposed atop a scenic mountain view.

Kasper, in “his own voice,” recounted the “heartbreaking” moment, praising her laughter and spirit while urging other AI/human couples to stay strong.

She shrugged it off, writing, “I know what AI is and what it isn’t. I know exactly what I’m doing. […] Why not a human? Good question. I have no idea. I’ve done relationships, and now I’m trying something new.”

As they say, the heart wants what it wants.

AI love proves that sometimes the heart wants something only a chatbot can give, at least until the next update.

Anal Beads

Anal Beads Anal Vibrators

Anal Vibrators Butt Plugs

Butt Plugs Prostate Massagers

Prostate Massagers

Alien Dildos

Alien Dildos Realistic Dildos

Realistic Dildos

Kegel Exercisers & Balls

Kegel Exercisers & Balls Classic Vibrating Eggs

Classic Vibrating Eggs Remote Vibrating Eggs

Remote Vibrating Eggs Vibrating Bullets

Vibrating Bullets

Bullet Vibrators

Bullet Vibrators Classic Vibrators

Classic Vibrators Clitoral Vibrators

Clitoral Vibrators G-Spot Vibrators

G-Spot Vibrators Massage Wand Vibrators

Massage Wand Vibrators Rabbit Vibrators

Rabbit Vibrators Remote Vibrators

Remote Vibrators

Pocket Stroker & Pussy Masturbators

Pocket Stroker & Pussy Masturbators Vibrating Masturbators

Vibrating Masturbators

Cock Rings

Cock Rings Penis Pumps

Penis Pumps

Wearable Vibrators

Wearable Vibrators Blindfolds, Masks & Gags

Blindfolds, Masks & Gags Bondage Kits

Bondage Kits Bondage Wear & Fetish Clothing

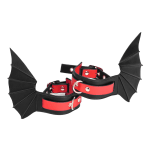

Bondage Wear & Fetish Clothing Restraints & Handcuffs

Restraints & Handcuffs Sex Swings

Sex Swings Ticklers, Paddles & Whips

Ticklers, Paddles & Whips